First and foremost, while I will not claim detailed knowledge in the area, the food industry is one of the most regulated industries anywhere, and with good reason. Most governments have a vested interest in ensuring the populace is not poisoned, which leads to the following illustrative statement from the FDA (emphasis mine):

> More than 3,000 state, local and tribal agencies have primary responsibility to regulate the retail food and foodservice industries in the United States. They are responsible for the inspection and oversight of over 1 million food establishments – restaurants and grocery stores, as well as vending machines, cafeterias, and other outlets in health-care facilities, schools, and correctional facilities.

For example, in California I immediately found a 105-page document describing how refrigeration units in food retailers shall be drained, staffed, and entered, and who should have access to them. Access was specifically limited to “only those essential in the handling of food products”, with enumerated exceptions.

There is little profit in messing with these regulations. They form a tangled web of federal, state, local and tribal agencies, and getting caught in it will likely not end well. It may be possible to rebuild some of the Whole Foods stores with a separate area that does not count as part of the grocery store, but this is unlikely to be Amazon’s first thought on the matter. It’s likely the main contribution Whole Foods makes to the edge cloud notion is that they have leased or purchased 400 locations. For this reason alone, I doubt Amazon would begin its assimilation of Whole Foods with mini data centers.

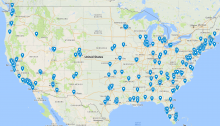

For the sake of analysis, though, let’s assume that they will, and that they attach a mini data center to, let’s say, the roof of each store. This would give them 440-odd points of presence around the continental USA (and three in Hawaii, the only ones that might actually make sense as edge data centers). The main issue with these, from an edge compute standpoint, is that they are geographically concentrated in certain urban centers.

The map above (also linked as interactive) shows a zoomed view of Whole Foods locations compared to Walmart’s. I use that comparison on the assumption that Walmart, as a national retailer, will have a store in most places where it is profitable. Note that, compared to Walmart, Whole Foods’ coverage is focused on a few coastal areas and is otherwise incidental to some major cities along the way, leaving massive areas where stores are few and far between (such as the entire region between Minnesota and Washington).

But, the astute might ask, wouldn’t it make sense in an urban center anyway? Surely there are millions of devices in the city of Philadelphia that these nine Whole-Foods-Turned-Datacenters could divide up between them for some computational Nirvana? This is where we have to turn to network topologies to see how these things work.

If two devices are on the same network, for example Comcast, traffic can take the shortest logical path between them, going up to the nearest shared router and back down again. However, if the two devices are on different networks, for example Comcast and AT&T, very often the data needs to go to one of the internet exchange points (or IXPs) pictured above. These are peering locations, where traffic flows between different provider networks. Not all traffic needs to go through the public ones; network providers with special agreements can set up their own peering points, but this is a good rule of thumb.
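As a rough illustration of that rule of thumb, here is a minimal Python sketch. The topology, the router names and the `path` helper are all invented for the example; real provider networks are neither strict trees nor this small, and private peering is ignored entirely.

```python
# Hypothetical topology: every node points at its upstream router.
# All names here are made up for illustration.
PARENT = {
    "phone_a": "comcast_local_1",
    "laptop_b": "comcast_local_1",
    "tv_c": "comcast_local_2",
    "comcast_local_1": "comcast_metro",
    "comcast_local_2": "comcast_metro",
    "comcast_metro": "comcast_core",
    "tablet_d": "att_local_1",
    "att_local_1": "att_metro",
    "att_metro": "att_core",
}

IXP = "ixp"  # assumed single public exchange point shared by both providers


def chain_to_core(node):
    """Return the list of hops from a node up to the top of its provider."""
    chain = [node]
    while chain[-1] in PARENT:
        chain.append(PARENT[chain[-1]])
    return chain


def path(src, dst):
    """Logical path between two devices under the rule of thumb above."""
    up = chain_to_core(src)
    down = chain_to_core(dst)
    shared = next((hop for hop in up if hop in down), None)
    if shared is not None:
        # Same provider: climb to the nearest shared router, then descend.
        return up[: up.index(shared) + 1] + down[: down.index(shared)][::-1]
    # Different providers: climb to each core and cross at the IXP.
    return up + [IXP] + down[::-1]


print(path("phone_a", "laptop_b"))  # 3 hops, turns around at comcast_local_1
print(path("phone_a", "tablet_d"))  # 9 hops, crosses the IXP
```

The point of the sketch is only the difference in shape: two neighbours on one provider turn around at the first shared router, while two neighbours on different providers travel all the way up, across the exchange, and back down, no matter how close together they physically sit.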

Against such a backdrop, the fact that there are nine edge centers in Philadelphia matters little, as traffic crossing between providers would still need to go through the only IXP in Philadelphia, assuming that the providers even choose to interconnect there. Bubley addresses this with an edit, adding:

> One other thing to consider here is how they go from a local data-centre to the network. It may need local break/out in, which telcos often avoid doing. Or it could be that Amazon builds its own local wireless networks, eg using LoRaWAN for smart cities, or even gets CBRS licences for private localised cellular networks.

Indeed, that is one thing to consider. As he notes, operators are often wary of doing such things. Apart from possibly undercutting their own offering, there are both technical and business reasons to avoid this. For one, the high level of abstraction provided by the IXPs helps keep the routing tables of routers in a network simple: they know a limited set of destinations and then a default route, the digital equivalent of saying “this is someone else’s problem”. With local breakout, a router suddenly needs to behave differently. It also takes time and money to set this up, which is part of why CloudFlare, which calls itself “dedicated to peering”, last year celebrated joining its 100th peering point. It takes time and effort. Now do it times four hundred.
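The “few destinations plus a default route” idea can be sketched with Python’s standard `ipaddress` module. The prefixes, next-hop names and the `next_hop` helper below are hypothetical, chosen only to show longest-prefix matching and what one local breakout entry adds; this is not how any particular operator configures its routers.

```python
import ipaddress

# Hypothetical routing table: a few known internal prefixes, then a default.
ROUTES = [
    ("10.1.0.0/16", "internal-a"),
    ("10.1.2.0/24", "internal-b"),
    ("0.0.0.0/0", "upstream"),  # default route: "someone else's problem"
]

# Assumed extra entry that a local breakout towards an edge site would need.
LOCAL_BREAKOUT = [("203.0.113.0/24", "local-edge")]


def next_hop(dest, routes=ROUTES):
    """Pick the next hop using longest-prefix match."""
    addr = ipaddress.ip_address(dest)
    matches = []
    for prefix, hop in routes:
        network = ipaddress.ip_network(prefix)
        if addr in network:
            matches.append((network.prefixlen, hop))
    # The default route matches everything, so matches is never empty here.
    return max(matches)[1]


print(next_hop("10.1.2.7"))                               # internal-b
print(next_hop("198.51.100.9"))                           # upstream
print(next_hop("203.0.113.5"))                            # upstream
print(next_hop("203.0.113.5", ROUTES + LOCAL_BREAKOUT))   # local-edge
```

One extra line looks harmless here, but every breakout site adds entries like it to routers that previously needed none, and each one has to be negotiated, provisioned and maintained.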

Leaving fixed networks for a while, there are additional hurdles when it comes to peering on mobile networks, and these are entirely related to how the networks are built and designed. Mobile operators spend a large amount of money on internal protocols in their networks specifically so that we can continue to use data even though the route the packets need to take to our cellphones changes constantly as we travel. The picture above shows the FCC-registered cell phone towers (not all towers need to be registered) in Miami, FL. A lot of towers are needed for cell phone coverage.

Between those towers (indeed, even possibly over WiFi and other technologies), cellphone operators use tunneling protocols to carry packets through their networks. These tunneling protocols wrap the IP packets that the device is sending, and shunt them through the local backhaul networks above into central data centers. This forms the “Packet Core”, the central piece responsible for ensuring that cellular devices get connectivity.
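To show what “wrapping” means in practice, here is a simplified sketch. The text above does not name a protocol; I am assuming something shaped like GTP-U, the tunneling protocol commonly used in LTE networks, and mimicking only its basic 8-byte header. The `encapsulate` and `decapsulate` helpers and the sample payload are made up, and a real deployment would additionally carry this inside UDP and an outer IP packet.

```python
import struct

# Simplified, GTP-U-like header (an assumption, see the paragraph above):
# 1 byte flags, 1 byte message type, 2 bytes payload length, 4 bytes tunnel ID.
HEADER_FORMAT = "!BBHI"
HEADER_SIZE = struct.calcsize(HEADER_FORMAT)  # 8 bytes
FLAGS = 0x30   # version 1, protocol type GTP, no optional fields
G_PDU = 0xFF   # message type meaning "this carries a user packet"


def encapsulate(inner_packet: bytes, tunnel_id: int) -> bytes:
    """Wrap the device's packet so the backhaul only sees the tunnel."""
    header = struct.pack(HEADER_FORMAT, FLAGS, G_PDU, len(inner_packet), tunnel_id)
    return header + inner_packet


def decapsulate(frame: bytes):
    """Unwrap at the far end of the tunnel; returns (tunnel_id, inner_packet)."""
    flags, msg_type, length, tunnel_id = struct.unpack(
        HEADER_FORMAT, frame[:HEADER_SIZE]
    )
    if msg_type != G_PDU:
        raise ValueError("not a user-data frame")
    return tunnel_id, frame[HEADER_SIZE:HEADER_SIZE + length]


inner = b"pretend this is the phone's IP packet"
frame = encapsulate(inner, tunnel_id=0x1234)
assert decapsulate(frame) == (0x1234, inner)
```

What matters for the edge argument is that routers along the backhaul forward on the outer wrapper and the tunnel ID, not on the destination the phone actually asked for. A server sitting next to the tower does not see ordinary internet traffic passing by.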

The key take-away from this is that mobile traffic only turns into internet traffic once it reaches the so-called PGW (Packet Data Network Gateway). This is the node that is responsible for assigning an IP address to the phone and allowing it to route out to the bigger network. PGWs are obviously not deployed everywhere; there might be one or more per city, but operators generally try to form a hierarchy in their network to keep it manageable.

Bubley further notes that Amazon could solve this by building its own network. They probably could, but that seems unlikely. While there are rumblings on the MulteFire front, this may, just like Google Fiber, be a play to get a cheaper price for existing services rather than to put up a labor- and capital-intensive network spanning continents.

No. I would say it is unlikely Whole Foods plays into an edge datacenter model, or that their points of presence as local datacenters played any part in the thought behind the acquisition.

However, should Amazon truly go pushing into the edge datacenter model, I would expect to find the first signs not on the infrastructure side but on the software development front. Edge computing is more than a game of “if you build it, they will come”. There is a large, unresolved question of how to build it in the first place, and building a network expecting one type of traffic, while receiving another, is by now a proverbial recipe for disaster.